I’m following the instructions to create the Fivetran connection in the Airflow Connections part of the UI, which seem fairly straightforward. However, I tried editing it, and just by going through this it took me through the instance creation pages with what I had previously filled in. Turns out, not so much… I’d have to delete my instance and create a new one. Now I’m not entirely sure what to do… I’m hoping there will be an easy way to restart the Airflow instance and therefore, I assume, refresh the code used to run it from the bucket. Upload new file to the S3 bucket with the code, done.

My recollection of this with dbt Cloud is that you can change the dbt version of an environment by editing it in the UI (which is good, although I know some people would like to Terraform this) and by making a PR to change the package version in the dbt_requirements.yml file (which is also OK, but I’d rather all packages were managed within the environment of the UI with the dbt version, as it could then guarantee compatibility before trying to use or develop in the environment).

Anyhow, back to the Metaplane Airflow repo and a commit to put these providers in the file there.

This makes me wonder about the upgrade process for Airflow and packages on MWAA… Would you have to destroy the instance and recreate it after changing the code? This doesn’t seem particularly graceful, or something I’d want to manage. I assume it will either error or just always take the latest version when the instance is started, if I don’t specify. It’s possible I may need to specify a version in the form of ==2.1.0 or similar, after the string for the provider name in the requirements.txt file.

Right, back on topic! The airflow-dbt-python provider, shared by Dani, has instructions for installation! Even with a section for MWAA!
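On the question above of refreshing the environment after uploading a new requirements.txt: one route that edits the existing environment instead of recreating it is to upload the file and then ask MWAA to use that exact object version. Below is a rough Python sketch of that idea, not something tested against a real environment. It assumes the bucket has versioning enabled (so S3 returns a `VersionId`) and that the clients passed in behave like boto3's `s3` and `mwaa` clients. The `publish_requirements` helper, the bucket name and the environment name are all hypothetical.

```python
"""Sketch: upload a new requirements.txt and point an MWAA environment at it."""


def publish_requirements(s3_client, mwaa_client, *, bucket, key, body, environment_name):
    """Upload `body` to s3://bucket/key, then update the MWAA environment to use it.

    `s3_client` and `mwaa_client` are assumed to look like boto3.client("s3")
    and boto3.client("mwaa"). Returns the S3 version id of the uploaded file.
    """
    # On a versioned bucket, put_object reports the new object's VersionId.
    response = s3_client.put_object(Bucket=bucket, Key=key, Body=body)
    version_id = response["VersionId"]

    # Tell MWAA which file (path relative to the bucket) and which version to install.
    mwaa_client.update_environment(
        Name=environment_name,
        RequirementsS3Path=key,
        RequirementsS3ObjectVersion=version_id,
    )
    return version_id


# Example call against AWS (hypothetical names; needs boto3 and credentials):
#
#   import boto3
#   publish_requirements(
#       boto3.client("s3"),
#       boto3.client("mwaa"),
#       bucket="my-airflow-bucket",
#       key="requirements.txt",
#       body=b"airflow-provider-fivetran\n",
#       environment_name="my-mwaa-environment",
#   )
```

Passing the clients in as arguments keeps the function easy to try out with stand-in objects before pointing it at a real account.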
As suspected, you need to change the requirements.txt file to include the name of the provider.

From the Astronomer registry, it looks as though the name of the Fivetran provider should be airflow-provider-fivetran and the dbt Cloud one should be apache-airflow-providers-dbt-cloud. This is a whole ecosystem in its infancy - look ahead at the Software Engineering ecosystem. It’s all of the above, working together, chipping away at the same problems until they’re solved. Not just practitioners hacking it together on their own.
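To make the requirements.txt change concrete, here is a minimal Python sketch that renders the file contents for the two providers named above. pip pins an exact version with a double equals sign (`==`). The version number used here is only a placeholder echoing the "2.1.0 or similar" guess, not a tested or recommended version, and the `render_requirements` helper is hypothetical.

```python
"""Sketch: render requirements.txt lines for extra Airflow providers."""
from typing import Mapping, Optional


def render_requirements(packages: Mapping[str, Optional[str]]) -> str:
    """Return requirements.txt text: 'name==version' when pinned, else just 'name'."""
    lines = []
    for name, version in packages.items():
        lines.append(f"{name}=={version}" if version else name)
    return "\n".join(lines) + "\n"


# Provider names as listed in the post; the pinned version is a placeholder.
PROVIDERS = {
    "airflow-provider-fivetran": None,              # unpinned: pip picks a version
    "apache-airflow-providers-dbt-cloud": "2.1.0",  # placeholder pin, an assumption
}

if __name__ == "__main__":
    print(render_requirements(PROVIDERS), end="")
```

Leaving a package unpinned lets pip choose a version at install time, which is convenient but means the environment can change between rebuilds; pinning trades that for reproducibility.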

I am intending to use dbt cloud, but if I were going to use dbt core, this would have been incredibly helpful - especially as it’s been well-tested with MWAA!

As a tangent, this is why I believe that we will solve the problems in the Modern Data Stack, as a community: From seeing that there is a requirements.txt file, I’m guessing this is how, but it’s not that obvious to someone new to this kind of tool! I was hoping to find the equivalent of this page in the dbt docs.

Dani Sola - SVP of Data at Clark - kindly shared a link to an Airflow Provider for dbt that one of his team, plus some additional contributors, have made.

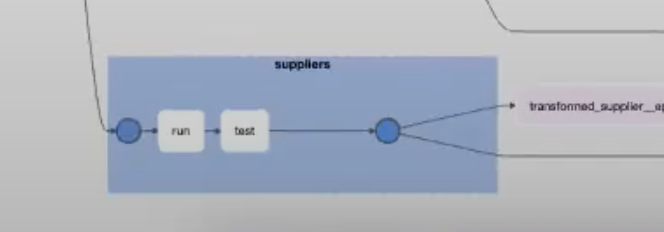

The links above mention providers that could be really helpful for me, as they will give me pre-built operators (plus hooks and sensors which I haven’t learned about yet) for Fivetran and dbt cloud.

But how do you install a provider in Airflow? Strange that these docs don’t actually tell you how, but just describe what is possible. Now that I’ve completed the parts of the course around operators and dependencies, it’s probably a good time to try and build some in Metaplane’s Airflow. This workflow would be very cumbersome for large DAGs that are maintained across bigger teams. I can see why companies using Airflow instead of dbt in the past usually didn’t have DAGs as large or as complex as those using dbt.
